By - M.M.Islam Imran

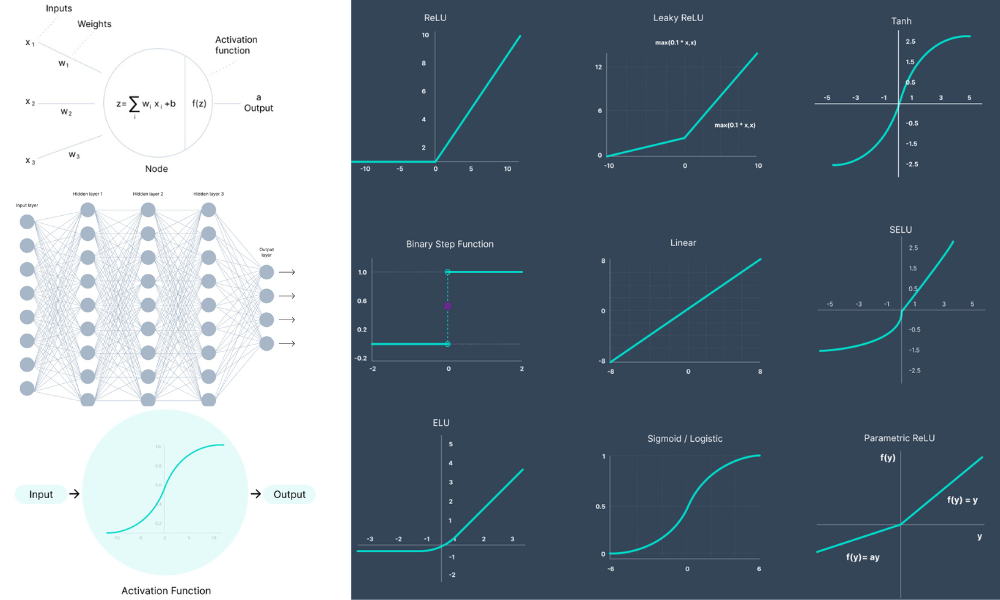

An activation function in a neural network is a mathematical operation applied to the output of each neuron in a layer, typically the weighted sum of its inputs plus a bias. It determines how strongly, if at all, the neuron is activated for a given input signal.

The primary purpose of an activation function is to introduce non-linearity into the network, enabling it to approximate complex functions and learn non-linear relationships in the data. Without activation functions, a neural network would reduce to a linear model regardless of how many layers it has, severely limiting its expressive power.

Commonly used activation functions include:

- Sigmoid: squashes the input into a range between 0 and 1, making it suitable for binary classification problems where the output needs to be interpreted as a probability.
- Hyperbolic Tangent (Tanh): squashes the input into a range between -1 and 1, giving a zero-centered output that can be used in both classification and regression tasks.
- Rectified Linear Unit (ReLU): outputs the input directly if it is positive and zero otherwise. It is widely used in deep learning architectures due to its simplicity and computational efficiency.
- Leaky ReLU: allows a small, non-zero gradient when the input is negative, addressing the "dying ReLU" problem, where neurons get stuck at zero output and stop learning during training.
- Exponential Linear Unit (ELU): provides a smooth alternative to ReLU, allowing negative values with a non-zero gradient and helping to alleviate the vanishing gradient problem.

Activation functions play a critical role in the training process of neural networks. They influence the convergence speed, stability, and performance of the model. Choosing the appropriate activation function depends on factors such as the nature of the problem, the architecture of the network, and computational considerations.
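The five functions described above can be sketched in a few lines of plain Python. This is a minimal illustration on scalar inputs, not how a deep learning framework implements them; the parameter names `negative_slope` and `alpha`, and their default values of 0.01 and 1.0, are assumptions chosen for the example rather than values taken from this article.

```python
import math


def sigmoid(x: float) -> float:
    """Squash x into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def tanh(x: float) -> float:
    """Squash x into the open interval (-1, 1)."""
    return math.tanh(x)


def relu(x: float) -> float:
    """Pass positive inputs through unchanged; output zero otherwise."""
    return max(0.0, x)


def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """Like ReLU, but keep a small slope for negative inputs.

    negative_slope=0.01 is an assumed default for illustration.
    """
    return x if x > 0 else negative_slope * x


def elu(x: float, alpha: float = 1.0) -> float:
    """Smooth alternative to ReLU: alpha * (exp(x) - 1) for x <= 0.

    alpha=1.0 is an assumed default for illustration.
    """
    return x if x > 0 else alpha * math.expm1(x)


# Compare the functions on a few sample inputs.
samples = [-2.0, -0.5, 0.0, 0.5, 2.0]
for fn in (sigmoid, tanh, relu, leaky_relu, elu):
    values = ", ".join(f"{fn(x):7.3f}" for x in samples)
    print(f"{fn.__name__:>10}: {values}")
```

Printing the values side by side makes the differences on negative inputs visible: ReLU returns exactly zero, Leaky ReLU returns a small negative number, and ELU curves smoothly toward `-alpha`.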

An activation function in a neural network is a mathematical operation applied to the output of each neuron, determining whether it should be activated or not based on the input signal.
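The claim that a network without activation functions reduces to a linear model can be checked numerically. The sketch below is only an illustration under stated assumptions: the weight matrices `W1` and `W2` and the input `x` are made-up values, biases are omitted for brevity, and the helpers `matvec`, `matmul`, and `relu_vec` are hypothetical names written for this example.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Multiply matrix A by matrix B (both lists of rows)."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def relu_vec(v):
    """Apply ReLU to every element of a vector."""
    return [max(0.0, z) for z in v]


# Made-up weights for two layers and one input vector (illustrative only).
W1 = [[1.0, -2.0], [0.5, 1.0]]
W2 = [[2.0, 1.0], [-1.0, 3.0]]
x = [1.0, 2.0]

# Two layers with no activation in between...
stacked = matvec(W2, matvec(W1, x))
# ...give the same result as one layer whose weights are W2 @ W1.
collapsed = matvec(matmul(W2, W1), x)

# Inserting ReLU between the layers breaks that equivalence.
with_relu = matvec(W2, relu_vec(matvec(W1, x)))

print("stacked linear layers:", stacked)
print("single merged layer:  ", collapsed)
print("with ReLU in between: ", with_relu)
```

The first two results match because composing linear maps yields another linear map, so extra layers add no expressive power. With ReLU in between, the negative intermediate value is clipped to zero and the output can no longer be reproduced by a single weight matrix applied to every input.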
